You bring a catalog. We tune a domain-specific search model on it. You get a hosted /search endpoint. Integrate like Algolia — except your search actually understands Hindi, Hinglish, misspellings, and brand-as-generic queries.

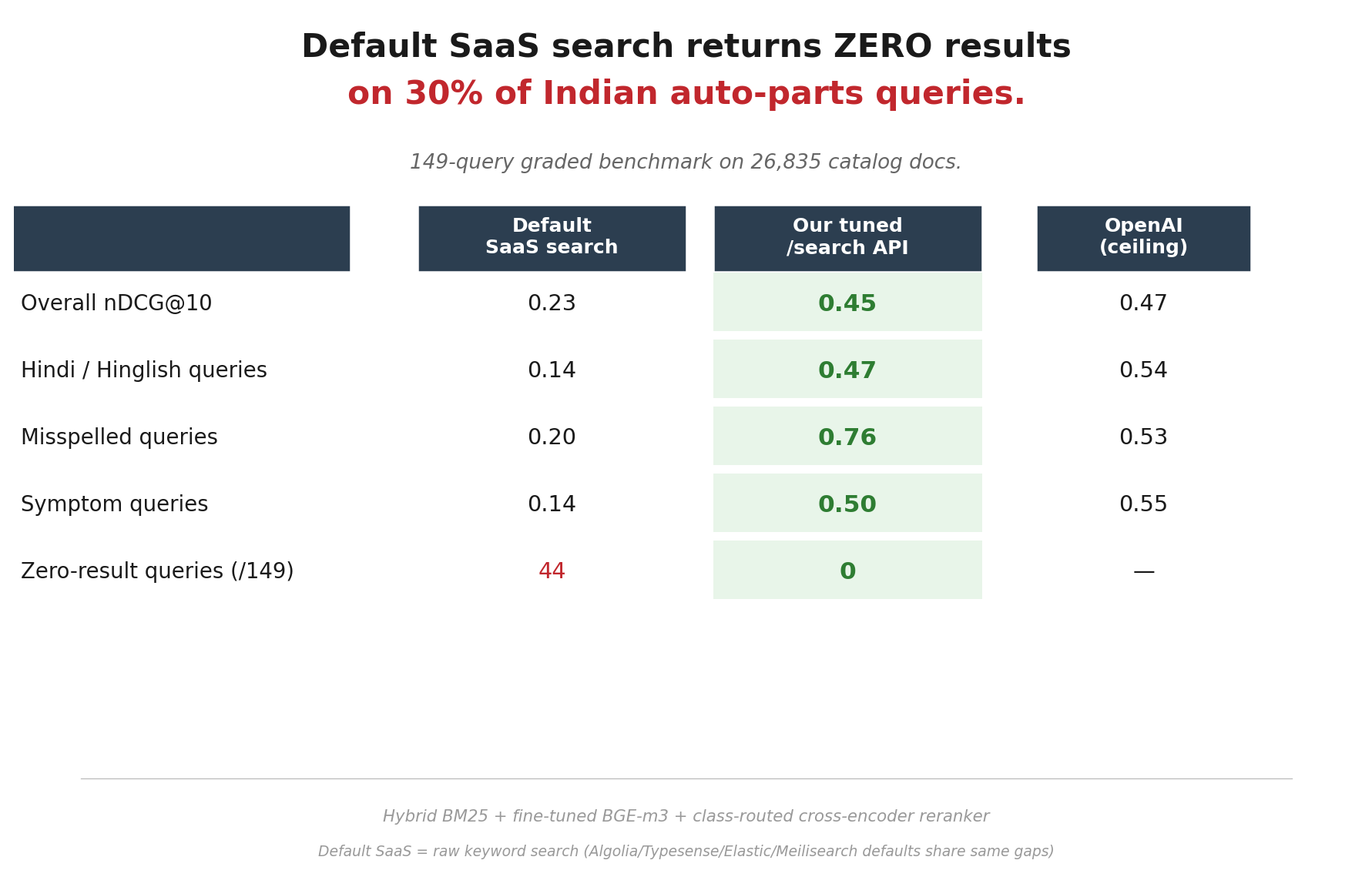

| | Default SaaS | Our /search | OpenAI |
|---|---|---|---|
| Overall nDCG@10 | 0.23 | 0.45 | 0.47 |
| Hindi / Hinglish | 0.14 | 0.47 | 0.54 |
| Misspelled queries | 0.20 | 0.76 | 0.53 |
| Symptom queries | 0.14 | 0.50 | 0.55 |
| Zero-result queries | 44 / 149 | 0 / 149 | — |

Default SaaS = raw keyword search. Algolia, Typesense, Elastic, and Meilisearch Cloud all behave similarly out of the box on Indian catalogs. The Default SaaS numbers come from Meilisearch with default settings, used as a proxy for the category.
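For readers who haven't worked with nDCG@10, here is a minimal sketch of how the metric is commonly computed. It assumes the linear-gain variant and graded relevance labels. The benchmark above may use a different variant, and the function names and sample grades are illustrative only.

```python
import math

def dcg_at_k(relevances, k=10):
    """Discounted cumulative gain over the top-k results (linear gain)."""
    return sum(
        rel / math.log2(rank + 1)
        for rank, rel in enumerate(relevances[:k], start=1)
    )

def ndcg_at_k(ranked_rels, judged_rels, k=10):
    """nDCG@k for one query.

    ranked_rels: relevance grades of the results, in the order returned.
    judged_rels: grades of every judged document for the query, used to
                 build the ideal (best possible) ordering.
    """
    ideal = dcg_at_k(sorted(judged_rels, reverse=True), k)
    return dcg_at_k(ranked_rels, k) / ideal if ideal > 0 else 0.0

# Illustrative grades only (0 = irrelevant, 3 = exact match).
perfect = ndcg_at_k([3, 2, 1], [3, 2, 1])
shuffled = ndcg_at_k([1, 2, 3], [3, 2, 1])
missed = ndcg_at_k([0, 0, 0], [3, 2, 1])
```

The score for a query set is then the mean of the per-query values, which is how a single number per row in a table like the one above would typically be produced.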

Mid-market e-commerce and marketplace teams in India running catalogs where:

Stack: fine-tuned BGE-M3 multilingual embedder (open source, domain-adapted on HSN + ITI + NHTSA data) + Meilisearch BM25 with custom Indic tokenizer + 2,700-pair Hinglish bridge dictionary + class-routed cross-encoder reranker. Hybrid RRF fusion, tuned per query class.
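To make "hybrid RRF fusion, tuned per query class" concrete, here is a minimal sketch of weighted reciprocal rank fusion. The class names, weights, and the constant `k=60` are assumptions for illustration, not the values used in the actual stack.

```python
from collections import defaultdict

# Hypothetical per-class weights; the real tuning is not published here.
CLASS_WEIGHTS = {
    "misspelled": {"dense": 1.5, "keyword": 0.5},
    "part_number": {"dense": 0.5, "keyword": 1.5},
}

def rrf_fuse(rankings, weights=None, k=60):
    """Fuse ranked lists of document ids with weighted reciprocal rank fusion.

    rankings: dict mapping a retriever name to its ranked list of doc ids.
    weights:  dict mapping a retriever name to a weight (default 1.0).
    k:        smoothing constant; 60 is a commonly used default.
    """
    weights = weights or {}
    scores = defaultdict(float)
    for source, doc_ids in rankings.items():
        w = weights.get(source, 1.0)
        for rank, doc_id in enumerate(doc_ids, start=1):
            scores[doc_id] += w / (k + rank)
    # Highest fused score first; ties broken by doc id for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))

rankings = {
    "dense": ["a", "b", "c"],   # embedder results
    "keyword": ["b", "d"],      # keyword/BM25 results
}
unweighted = rrf_fuse(rankings)
keyword_heavy = rrf_fuse(rankings, CLASS_WEIGHTS["part_number"])
```

With equal weights, a document found by both retrievers (`"b"`) rises to the top. Shifting weight toward the keyword side moves keyword-only hits such as `"d"` above dense-only ones, which is the kind of per-class adjustment the stack description refers to.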

All open-source base components. No proprietary embedding API dependency.

The auto-parts endpoint is a reference implementation. The actual product I'm chasing: make catalog-search tuning agentic. You bring a catalog and 30 example queries; AI agents (Claude + Codex) walk the same 11 stages end-to-end; human in the loop only for taste decisions.

Playbook-as-code. I ran the auto-parts version manually so the playbook could exist. Read the 11-stage playbook.

If you're running catalog search in India and your users are frustrated — I'll run a free benchmark on your data. Send a CSV sample + 20-30 example queries real customers type.